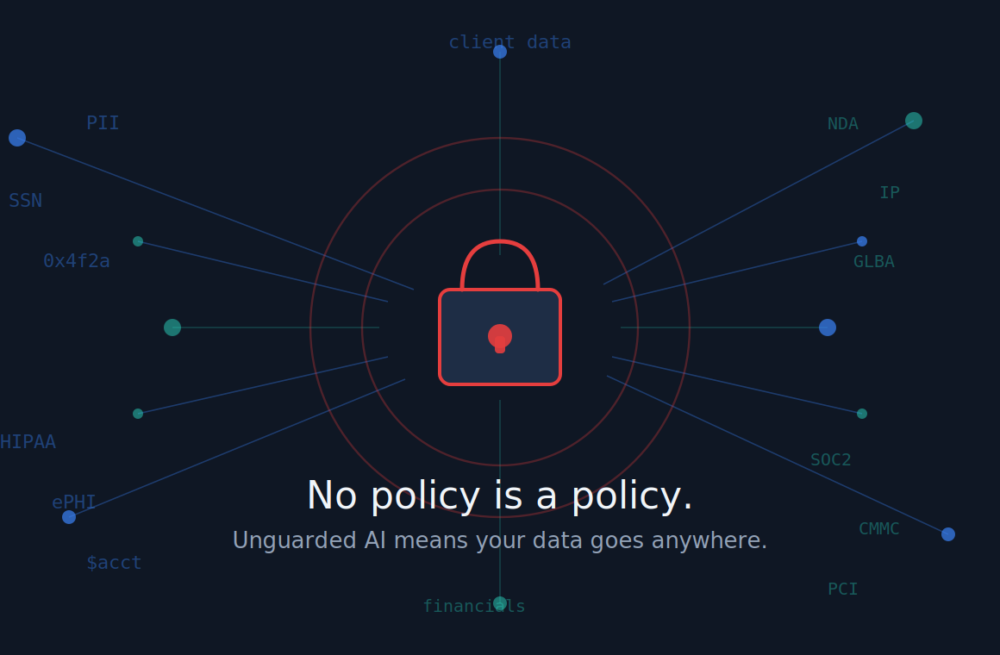

The default policy no one meant to create

If your organization hasn't established a formal AI Acceptable Use Policy, you haven't avoided making a decision — you've already made one.

And here's what that unwritten policy says: It's okay for employees to put any data into any AI tool, anywhere, at any time, for any reason.

That's not an exaggeration. That's the default.

The Policy Vacuum Problem

Every week, your employees are using AI — ChatGPT, Copilot, Gemini, Claude — to draft emails, summarize documents, analyze data, and research clients. Some of these tools were approved by IT. Many were not. And in almost every case, the data flowing into those prompts is leaving your organization's control the moment the "enter" key is pressed.

When there's no written policy, there's no rule being broken. Employees aren't being reckless; they're being efficient. They're filling a vacuum with their own best judgment, which may have little to do with your legal obligations, compliance requirements, or risk tolerance.

The absence of a policy isn't neutrality. It's permissiveness by default.

Shadow AI Is Already Here

One of the most telling questions in the AI Risk Assessment asks: "In the past 30 days, have you discovered employees using AI tools leadership was unaware of?"

For most organizations, the honest answer isn't "No." It's "We have no way to know."

Employees don't adopt unsanctioned tools out of malice — they adopt them because they work and nothing is telling them not to. Without a policy, your data is traveling to destinations you've never reviewed, under terms of service your legal team has never read.

If your organization operates under HIPAA, GLBA, SOC 2, or CMMC, that's not just a governance gap — it's a compliance exposure. "We didn't have a policy" is not a defense. It's an admission.

Don't Wait for an Incident

Most organizations don't address AI governance until something goes wrong — a client calls about their data, an auditor asks for documentation that doesn't exist, or an employee shares something sensitive in a prompt they assumed was private.

By then, it's damage control, not risk management.

The good news: this is one of the more straightforward gaps to close. A clear, acknowledged AI Acceptable Use Policy can be built and distributed in a matter of weeks. It doesn't require a major technology investment. It requires intent — and doing it before you need it.

Start with a Risk Assessment

Not sure where your organization stands? The free AI Risk Assessment takes about five minutes and benchmarks your readiness across ten areas — including governance, shadow AI, data privacy, training, and whether you have an Acceptable Use Policy at all.

You can also join us for the AI Risk to Adoption Program℠ webinar on June 4, 2026, at 1:00 PM Eastern for a deeper look at moving from reactive to intentional on AI governance. Register here.

Your organization is already using AI. The only question is whether you're managing it — or it's managing you.
